Gradient Descent in Machine Learning

AI summary: a line-fitting example that explains the goal, the process, and the intuition behind gradient descent.

Background

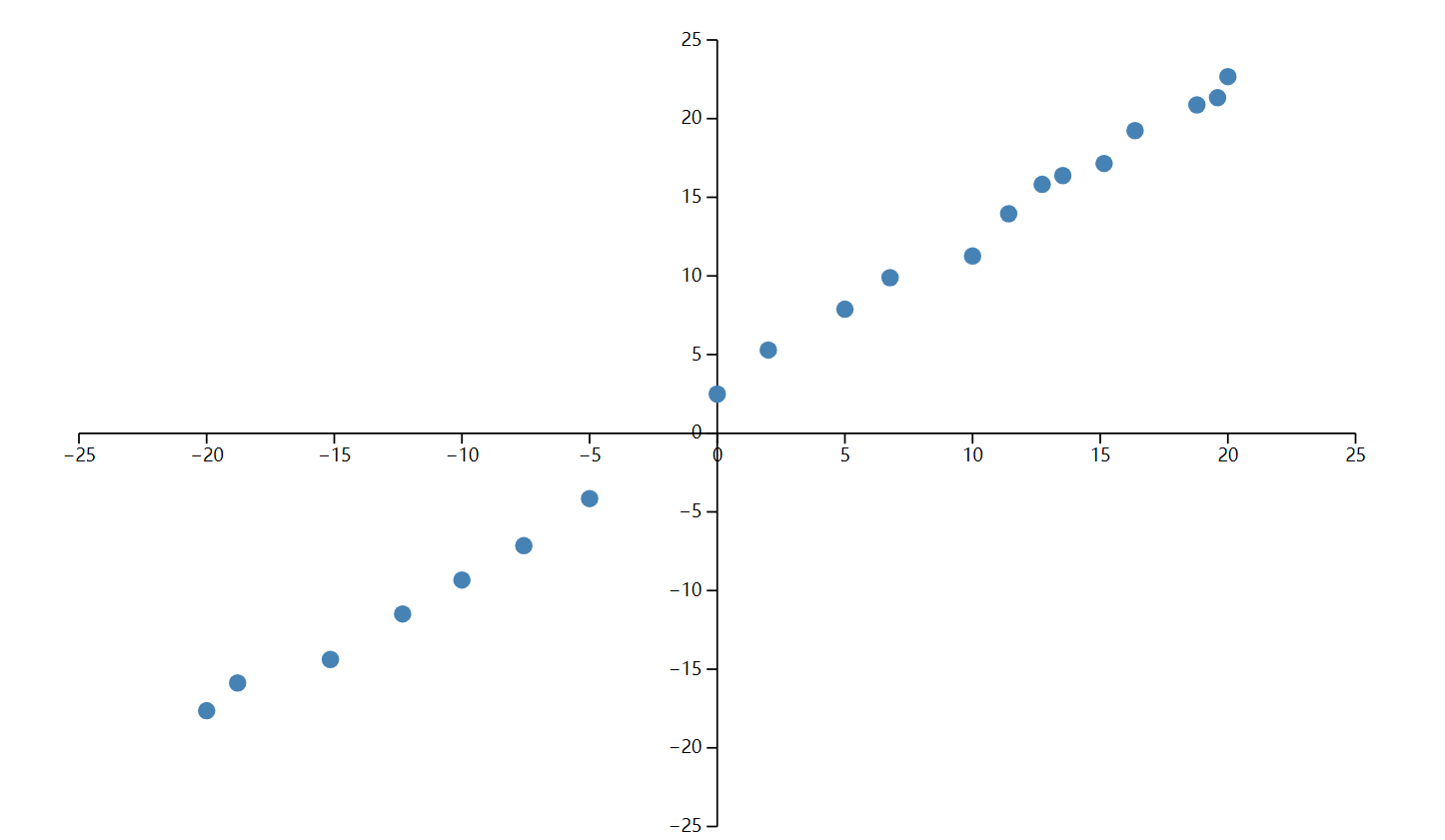

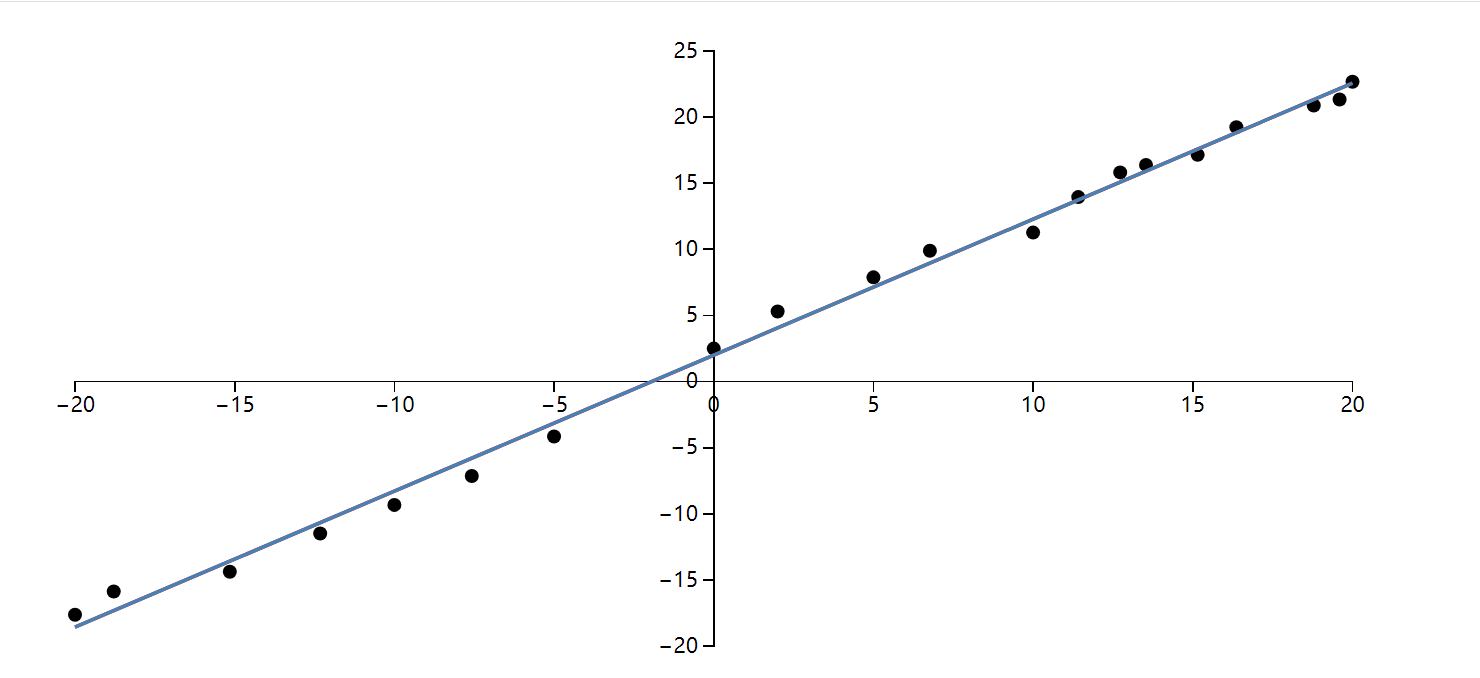

I have framed gradient descent as an exercise: given 20 points, fit a straight line to them.

```javascript
const data = [
  { x: -20.000, y: -17.641 },
  { x: -15.152, y: -14.381 },
  { x: -10.000, y: -9.327 },
  { x: -5.000, y: -4.154 },
  { x: 0.000, y: 2.493 },
  { x: 5.000, y: 7.882 },
  { x: 10.000, y: 11.264 },
  { x: 12.727, y: 15.819 },
  { x: 15.152, y: 17.147 },
  { x: 18.787, y: 20.874 },
  { x: 20.000, y: 22.672 },
  { x: -18.787, y: -15.874 },
  { x: -12.323, y: -11.486 },
  { x: -7.576, y: -7.148 },
  { x: 2.000, y: 5.293 },
  { x: 6.768, y: 9.888 },
  { x: 11.414, y: 13.952 },
  { x: 13.535, y: 16.384 },
  { x: 16.364, y: 19.241 },
  { x: 19.596, y: 21.328 }
];
```

Approach

Let's first plot the points with D3. You can probably tell at a glance that the line is roughly y = x + 2, but how do we write code that finds it?

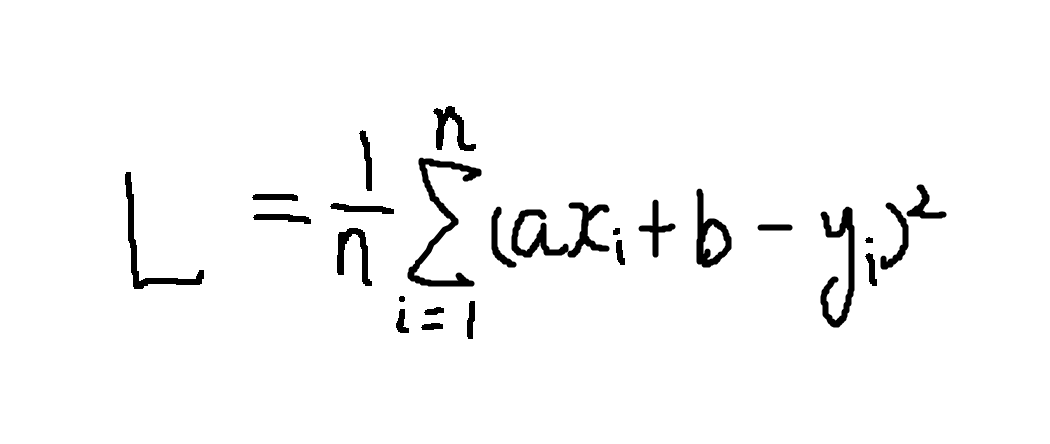

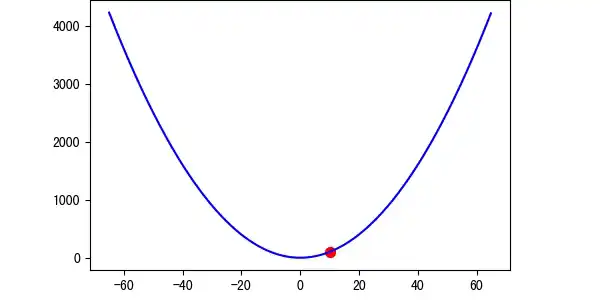

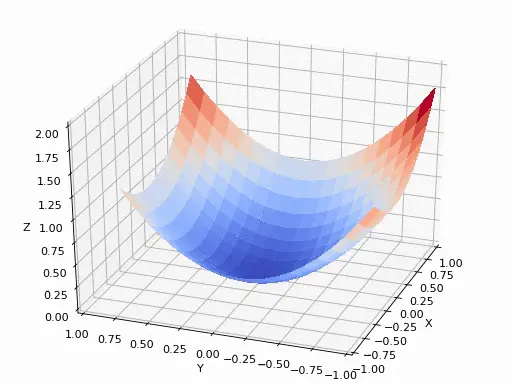

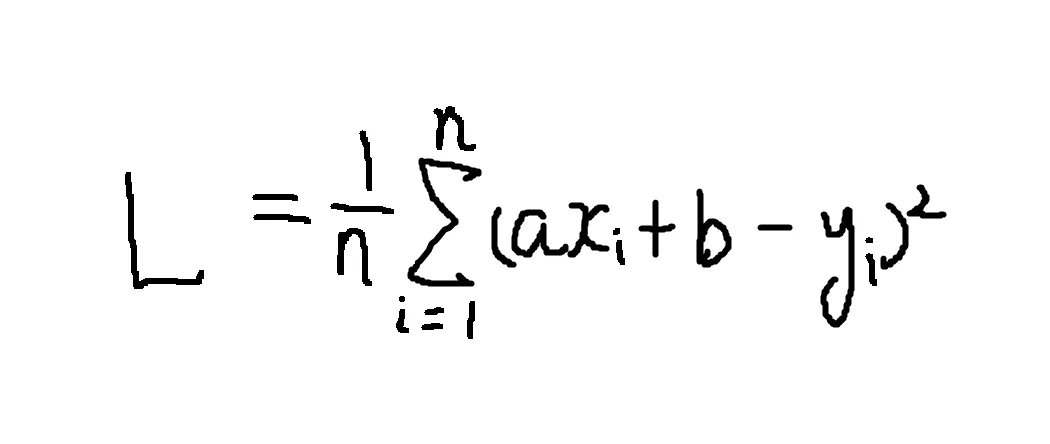

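We know that a straight line has the equation y = ax + b, so we can keep adjusting the parameters a and b to improve the fit.

But how do we measure how well we are doing? In other words, how do we decide that a = 1, b = 2 fits better than a = 2, b = 2?

We can quantify the error in a single formula and plug all the data into it. If a = 1, b = 2 produces a smaller value than a = 2, b = 2, then it is the better fit.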
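To make the comparison concrete, here is a minimal sketch in Python. It assumes the "formula" is the mean squared error, $L(a, b) = \frac{1}{n}\sum_i (y_i - (a x_i + b))^2$, which matches the gradient used in the JavaScript listing further down. The helper name `mse` is made up for this illustration.

```python
# The 20 sample points from the article, as (x, y) pairs.
points = [
    (-20.000, -17.641), (-15.152, -14.381), (-10.000, -9.327), (-5.000, -4.154),
    (0.000, 2.493), (5.000, 7.882), (10.000, 11.264), (12.727, 15.819),
    (15.152, 17.147), (18.787, 20.874), (20.000, 22.672), (-18.787, -15.874),
    (-12.323, -11.486), (-7.576, -7.148), (2.000, 5.293), (6.768, 9.888),
    (11.414, 13.952), (13.535, 16.384), (16.364, 19.241), (19.596, 21.328),
]


def mse(a, b):
    """Mean squared error of the line y = a*x + b (assumed quality metric)."""
    return sum((y - (a * x + b)) ** 2 for x, y in points) / len(points)


loss_good = mse(1, 2)  # candidate a = 1, b = 2
loss_bad = mse(2, 2)   # candidate a = 2, b = 2
print(f"a=1, b=2 -> loss {loss_good:.3f}")
print(f"a=2, b=2 -> loss {loss_bad:.3f}")
print("better fit:", "a=1, b=2" if loss_good < loss_bad else "a=2, b=2")
```

The smaller the returned value, the closer the line runs to the points, so the search for a good line becomes a search for the (a, b) pair that minimizes this number.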

Gradient Descent

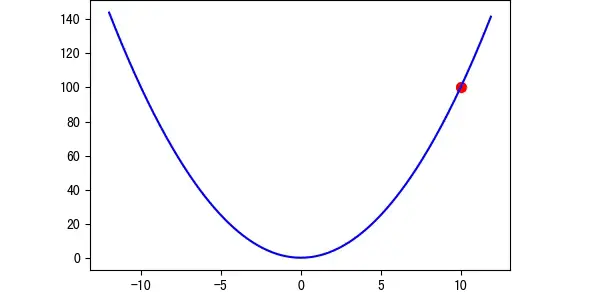

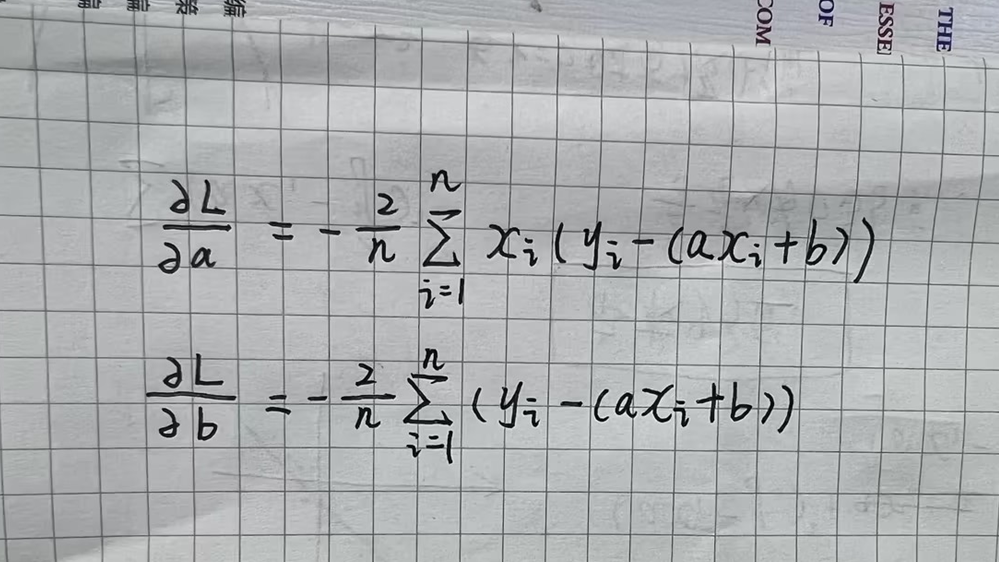

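We bring in gradient descent to solve this. If we do not apply a closed-form formula directly, a computer's strength is that it can brute-force the answer: pick an arbitrary starting point, nudge it up or down a little each time, then evaluate and compare. That approach is far too inefficient, and with several parameters the time complexity becomes prohibitive.

We can instead look at the problem in terms of limits. For a differentiable curve such as our loss, the closer we get to a minimum, the closer the derivative gets to zero.

Building on the brute-force idea, we multiply the step size by the derivative at the current point (-step * dy). This way we take larger steps when we are far from the minimum and smaller steps as we approach it!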
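The derivative-scaled step can be sketched in one dimension. This is an illustration only: the toy loss f(x) = (x - 3)^2, the starting point, and the step size 0.1 are assumptions chosen for the example, not values from the article.

```python
def f(x):
    """Toy loss with its minimum at x = 3 (hypothetical example)."""
    return (x - 3) ** 2


def df(x):
    """Derivative of f."""
    return 2 * (x - 3)


step = 0.1  # base step size (the learning rate)
x = 0.0     # arbitrary starting point
for i in range(100):
    dy = df(x)
    # Far from the minimum |dy| is large, so the move is large;
    # near the minimum dy approaches 0 and the moves shrink.
    x += -step * dy

print(f"x = {x:.6f}, f(x) = {f(x):.2e}")
```

The sign of the derivative also tells us which way to go, so there is no need to try both "a little more" and "a little less" as the brute-force search does.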

```html
<!DOCTYPE html>
<html lang="en">
<head>
  <meta charset="UTF-8">
  <meta name="viewport" content="width=device-width, initial-scale=1.0">
  <title>Linear Regression with D3.js</title>
  <script src="https://d3js.org/d3.v7.min.js"></script>
  <style>
    .axis path,
    .axis line {
      fill: none;
      shape-rendering: crispEdges;
    }
    .axis text {
      font-size: 12px;
    }
  </style>
</head>
<body>
  <div id="chart"></div>
  <script>
    const data = [
      { x: -20.0, y: -17.641 },
      { x: -15.152, y: -14.381 },
      { x: -10.0, y: -9.327 },
      { x: -5.0, y: -4.154 },
      { x: 0.0, y: 2.493 },
      { x: 5.0, y: 7.882 },
      { x: 10.0, y: 11.264 },
      { x: 12.727, y: 15.819 },
      { x: 15.152, y: 17.147 },
      { x: 18.787, y: 20.874 },
      { x: 20.0, y: 22.672 },
      { x: -18.787, y: -15.874 },
      { x: -12.323, y: -11.486 },
      { x: -7.576, y: -7.148 },
      { x: 2.0, y: 5.293 },
      { x: 6.768, y: 9.888 },
      { x: 11.414, y: 13.952 },
      { x: 13.535, y: 16.384 },
      { x: 16.364, y: 19.241 },
      { x: 19.596, y: 21.328 }
    ];

    // Initialize parameters
    let a = 0;
    let b = 0;
    const learningRate = 0.001;
    const iterations = 10000;
    const n = data.length;

    // Compute the gradients of the mean squared error with respect to a and b
    function computeGradients(a, b) {
      let da = 0;
      let db = 0;
      for (let i = 0; i < n; i++) {
        const x = data[i].x;
        const y = data[i].y;
        const y_pred = a * x + b;
        da += -2 * x * (y - y_pred);
        db += -2 * (y - y_pred);
      }
      da /= n;
      db /= n;
      return { da, db };
    }

    // On each iteration, compute the gradients and subtract them (scaled by the learning rate)
    function gradientDescent() {
      for (let i = 0; i < iterations; i++) {
        const gradients = computeGradients(a, b);
        a -= learningRate * gradients.da;
        b -= learningRate * gradients.db;
      }
    }

    // Run gradient descent
    gradientDescent();

    // D3.js Visualization
    const margin = { top: 20, right: 30, bottom: 40, left: 40 };
    const width = 800 - margin.left - margin.right;
    const height = 400 - margin.top - margin.bottom;

    const svg = d3.select("#chart")
      .append("svg")
      .attr("width", width + margin.left + margin.right)
      .attr("height", height + margin.top + margin.bottom)
      .append("g")
      .attr("transform", `translate(${margin.left},${margin.top})`);

    // Set the scales so that the axes cross at (0,0) inside the chart
    const x = d3.scaleLinear()
      .domain([-20, 20]) // Set the x domain to be symmetrical around 0
      .range([0, width]);

    const y = d3.scaleLinear()
      .domain([-20, 25]) // Set the y domain to accommodate data points
      .range([height, 0]);

    // Draw X and Y axes
    svg.append("g")
      .attr("class", "x axis")
      .attr("transform", `translate(0,${y(0)})`) // Set y(0) to place X axis at y = 0
      .call(d3.axisBottom(x));

    svg.append("g")
      .attr("class", "y axis")
      .attr("transform", `translate(${x(0)},0)`) // Set x(0) to place Y axis at x = 0
      .call(d3.axisLeft(y));

    // Draw Data Points
    svg.selectAll(".dot")
      .data(data)
      .enter().append("circle")
      .attr("class", "dot")
      .attr("cx", d => x(d.x))
      .attr("cy", d => y(d.y))
      .attr("r", 4);

    // Draw Regression Line
    const line = d3.line()
      .x(d => x(d.x))
      .y(d => y(a * d.x + b));

    svg.append("path")
      .datum(data)
      .attr("class", "line")
      .attr("fill", "none")
      .attr("stroke", "steelblue")
      .attr("stroke-width", 2)
      .attr("d", line);
  </script>
</body>
</html>
```

There is a more challenging task here. The dataset in this example is small; with a large dataset, or with a multi-layer neural network, writing the loops directly like this causes performance problems. Notice that at its core we are working through many linear equations at once, so all of our computation can be expressed as linear algebra and then run with GPU.js, which was covered earlier.

The final result:

```python
import torch
import torch.nn as nn
import numpy as np
import matplotlib.pyplot as plt

# Original data points
data = [
    {"x": -20.0, "y": -17.641}, {"x": -15.152, "y": -14.381},
    {"x": -10.0, "y": -9.327}, {"x": -5.0, "y": -4.154},
    {"x": 0.0, "y": 2.493}, {"x": 5.0, "y": 7.882},
    {"x": 10.0, "y": 11.264}, {"x": 12.727, "y": 15.819},
    {"x": 15.152, "y": 17.147}, {"x": 18.787, "y": 20.874},
    {"x": 20.0, "y": 22.672}, {"x": -18.787, "y": -15.874},
    {"x": -12.323, "y": -11.486}, {"x": -7.576, "y": -7.148},
    {"x": 2.0, "y": 5.293}, {"x": 6.768, "y": 9.888},
    {"x": 11.414, "y": 13.952}, {"x": 13.535, "y": 16.384},
    {"x": 16.364, "y": 19.241}, {"x": 19.596, "y": 21.328}
]

# Convert to PyTorch tensors of shape (20, 1)
x_data = torch.tensor([[d["x"]] for d in data], dtype=torch.float32)
y_data = torch.tensor([[d["y"]] for d in data], dtype=torch.float32)


class LinearRegression(nn.Module):
    def __init__(self):
        super().__init__()
        self.linear = nn.Linear(1, 1)  # single input, single output

    def forward(self, x):
        return self.linear(x)


# Initialize the model
model = LinearRegression()

# Loss function and optimizer
criterion = nn.MSELoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.001)

# Training parameters
epochs = 10000
loss_history = []

# Training loop
for epoch in range(epochs):
    # Forward pass
    outputs = model(x_data)
    loss = criterion(outputs, y_data)

    # Backward pass and parameter update
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()

    # Record the loss
    loss_history.append(loss.item())

    # Print progress every 1000 epochs
    if (epoch + 1) % 1000 == 0:
        print(f'Epoch [{epoch+1}/{epochs}], Loss: {loss.item():.4f}')

# Read back the trained parameters
a = model.linear.weight.item()
b = model.linear.bias.item()
print(f'\nTraining complete: y = {a:.4f}x + {b:.4f}')

# Visualize the results
plt.figure(figsize=(12, 6))

# Plot the original data points and the fitted line
plt.subplot(1, 2, 1)
plt.scatter(x_data.numpy(), y_data.numpy(), color='blue', label='Data points')
plt.plot([-20, 20], [a*(-20)+b, a*20+b], 'r-', lw=2,
         label=f'y = {a:.4f}x + {b:.4f}')
plt.title('Linear regression fit')
plt.xlabel('x')
plt.ylabel('y')
plt.grid(True, linestyle='--', alpha=0.7)
plt.axhline(0, color='black', linewidth=0.5)
plt.axvline(0, color='black', linewidth=0.5)
plt.legend()
plt.axis([-22, 22, -25, 25])

# Plot the loss curve
plt.subplot(1, 2, 2)
plt.plot(loss_history, color='green')
plt.title('Training loss')
plt.xlabel('Iteration')
plt.ylabel('MSE loss')
plt.grid(True, linestyle='--', alpha=0.5)
plt.yscale('log')  # a log scale makes the decay easier to see
plt.tight_layout()
plt.show()

# Print the final parameters
print(f"Slope a: {a:.4f}")
print(f"Intercept b: {b:.4f}")
```
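The earlier remark that the whole computation can be recast as linear algebra can be made concrete with a short derivation. This is a sketch that assumes the mean squared error loss, which is what `computeGradients` and `nn.MSELoss` both correspond to. The symbols $X$, $\theta$, $\mathbf{y}$ and $\eta$ (the learning rate) are notation introduced here, not taken from the article.

$$
L(a, b) = \frac{1}{n}\sum_{i=1}^{n}\bigl(y_i - (a x_i + b)\bigr)^2
$$

$$
\frac{\partial L}{\partial a} = \frac{1}{n}\sum_{i=1}^{n} -2\,x_i\bigl(y_i - (a x_i + b)\bigr),
\qquad
\frac{\partial L}{\partial b} = \frac{1}{n}\sum_{i=1}^{n} -2\,\bigl(y_i - (a x_i + b)\bigr)
$$

Stacking the data as

$$
X = \begin{pmatrix} x_1 & 1 \\ \vdots & \vdots \\ x_n & 1 \end{pmatrix},
\qquad
\theta = \begin{pmatrix} a \\ b \end{pmatrix},
\qquad
\mathbf{y} = \begin{pmatrix} y_1 \\ \vdots \\ y_n \end{pmatrix}
$$

gives both partial derivatives in one expression, and the update becomes a single matrix step:

$$
\nabla_\theta L = \frac{2}{n}\,X^{\top}\bigl(X\theta - \mathbf{y}\bigr),
\qquad
\theta \leftarrow \theta - \eta\,\nabla_\theta L
$$

The first row of $\nabla_\theta L$ is exactly `da` and the second is `db` from the JavaScript loop, so the per-point loop collapses into two matrix products, which is the kind of operation a GPU library is built to accelerate.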